As I am sure you have noticed, the term “artificial intelligence” is all up in the news these days, and for a bewildering variety of reasons. It’s causing students to celebrate and workers to go on strike. It’s getting the Beatles back together. It’s falling in love with reporters from The New York Times. It’s doing improvised comedy. It’s threatening humanity with extinction.

But all this news can be difficult to parse without an understanding of some basic contextual details — details that the mainstream media typically skimp on. Moreover, there is a lot of wild hype out there passing as serious commentary. Plus… well, this stuff is complicated. Some of us are getting old.

So here I have attempted to clarify the issue for those who fear that they may be falling behind. And I have done so, you will be pleased to know, in the same way I always have: by typing out each and every word you are about to read with my own two fingers.

You can call me “AI”

First it must be said that, strictly speaking, the term “AI” is still totally inappropriate to describe any existing technology. It is a buzzword, a flim-flammy marketing term, an aspiration, a dream which may or may not someday become reality. For better or worse, it has become a term people use to describe any machine that appears to do some thinking (the Nintendo I played growing up back in the ’80s often pitted me against an “AI”, for instance), but it is important to be clear: there is nothing truly intelligent about these machines. At least, not yet.

This loosey-goosey use of the term “AI” puts the onus on people to specify whether they are talking about something like a Nintendo AI or something more deserving of the name: something that, for all intents and purposes, is conscious, like Skynet or Commander Data. To this end, the terms “weak AI” and “strong AI” are often used, with the distinction usually being made on the basis that the former can only do one thing (e.g., play Tecmo Bowl) whereas the latter would, if it existed, be able to apply itself to many different tasks, like humans and other intelligent animals can. These are not well-defined categories, however; the line that divides them is fairly arbitrary and the criteria that a strong AI must meet are the subject of ongoing debate. Most authorities on the subject would probably point to the Turing Test as the ultimate dividing line, despite imperfections in this test. But, again, this debate is still purely academic: despite numerous reports that the Turing Test has been passed, as far as I can tell these are all greatly exaggerated.

Although strong AI exists only in science fiction, weak AIs are getting better and better all the time, and recently they have made some significant strides: see programs like ChatGPT from OpenAI (in partnership with Microsoft), Bard from Google (or Alphabet), and LLaMA from Facebook (or Meta). This latest generation of AI, despite being weak, is simultaneously wowing a lot of people and making a lot of people do a lot of worrying. So what separates it from preceding generations of AI?

It’s in his chips

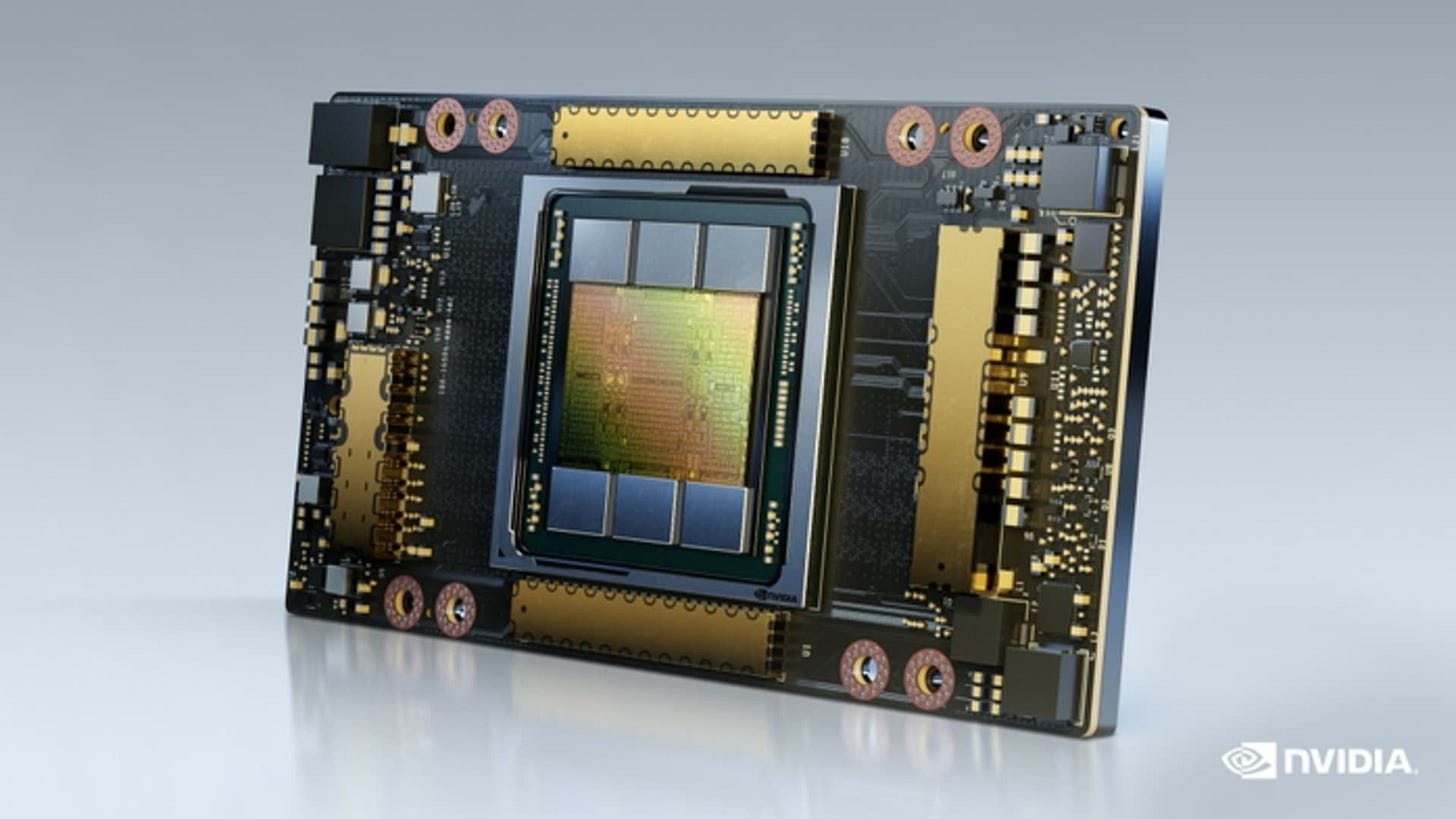

The difference boils down to recent advances in hardware — specifically, in computer chips (or “chips”, as the kids like to say), or, to be still more specific, chips called Graphics Processing Units (GPUs).

Unlike CPUs (Central Processing Units), which are kind of general-purpose chips usually associated with Intel (until recently the world’s largest chip maker), GPUs are more specialized, having evolved to service the needs of that great mother of invention, the video game industry. To more efficiently process video game graphics, GPUs are designed to do multiple smaller tasks simultaneously, or “in parallel”. And in 2006, scientists at Stanford University discovered that this quality made GPUs perfectly suited to another task: namely, performing large calculations that are called “embarrassingly parallel” on account of the fact that they can be broken down into many smaller constituent calculations that do not depend on one another. By using GPUs to run these parallel calculations in parallel, the researchers found, these otherwise time-consuming calculations could be accelerated by orders of magnitude: by tens or even thousands of times. And this kind of “off-label” use of GPUs quickly grew as people realized its potential and began using GPUs for various practical purposes, particularly for cryptocurrency mining and for training and applying AI.
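To make “embarrassingly parallel” a little more concrete, here is a minimal Python sketch. It is only an illustration: the `brighten` task, the chunking helper, and the use of CPU threads as a stand-in for GPU cores are all my own assumptions, and the code demonstrates the structure of the problem rather than any real speedup. The point is that each pixel can be processed without knowing anything about the others, so the work can be handed out to any number of workers and stitched back together afterwards.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel, amount=10):
    """One tiny, independent task: it needs no information about other pixels."""
    return min(pixel + amount, 255)


def split(values, parts):
    """Cut a list into roughly equal chunks, one per worker."""
    size = max(1, -(-len(values) // parts))  # ceiling division, at least 1
    return [values[i:i + size] for i in range(0, len(values), size)]


def brighten_chunk(chunk):
    """Process one chunk on its own; chunks never talk to each other."""
    return [brighten(p) for p in chunk]


def brighten_parallel(pixels, workers=4):
    """Hand the chunks to a pool of workers, then glue the results together."""
    chunks = split(pixels, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(brighten_chunk, chunks)  # map keeps chunk order
        return [p for chunk in results for p in chunk]


pixels = list(range(0, 256, 5))           # a made-up row of pixel values
serial = [brighten(p) for p in pixels]    # one worker doing everything
parallel = brighten_parallel(pixels)      # the same work, split four ways
print(serial == parallel)
```

Because no chunk depends on any other, the split version gives exactly the same answer as the one-at-a-time version. A GPU applies the same idea with thousands of workers instead of four.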

These chips make all the difference between something like a Nintendo AI and ChatGPT. While the former can only follow a strict, unchanging, and manually programmed set of rules, thanks to GPUs the latter consists of something called a “neural network” that can be trained by machine learning algorithms. And thanks, in turn, to these neural networks, modern AI has become much more flexible, powerful, and apparently intelligent.

So central are GPUs to the current AI boom that state-of-the-art GPUs currently sell for around $10,000 each, and the leading GPU maker (a company called Nvidia that invested early on in making its chips programmable and thus more widely applicable) was recently valued at over $1 trillion, a feat only ever accomplished by a few companies: Apple, Amazon, Alphabet, and Microsoft.1 (Nvidia’s next closest competitor in this area, a company called AMD, is a distant second, at a paltry $186.5 billion.)

Another consequence of their importance to modern AI systems is that GPUs have become a crucial flashpoint in the new cold war, with the US imposing severe restrictions on China’s ability to acquire them.2 And this tactic does seem to be having a major negative impact on the Chinese AI industry: see the increasingly notable absence of a Chinese answer to ChatGPT — a program that, much to Nvidia’s delight, has apparently required around 30,000 of these $10,000 video game chips to train and run.

Why ChatGPT can’t write

So what can a neural network running on $300 million worth of GPUs do? ChatGPT is what is called a “large language model”, which means that it has been trained on such a large volume of written text that it appears to be able to produce original writing in response to prompts. Its training data consisted of a huge snapshot of the internet that included academic articles, blog posts, instruction manuals, Kickstarter pages, news media, Reddit threads, romance novels, and probably also things like personal emails: whatever its creators at OpenAI could get their hands on, basically, since the whole premise of large language models is that more training makes them better.

This is because what large language models do over the course of their training is build a statistical representation of human language. Essentially they try to learn the probability that a human would use this or that word or phrase given those that preceded them. The bigger the data set, the thinking goes, the more accurate these probabilities will be, letting the AI more convincingly emulate human writing.
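For the curious, here is a toy Python sketch of what “a statistical representation of human language” can mean. It is emphatically not how ChatGPT is built: real large language models use neural networks and look at far more than the single preceding word. The function name `train_bigram_model` and the one-sentence “corpus” are inventions for illustration. The sketch simply counts which word follows which, then turns those counts into probabilities.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Estimate P(next word | previous word) by counting word pairs."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1

    model = {}
    for prev, followers in counts.items():
        total = sum(followers.values())
        # Turn raw counts into probabilities that sum to 1 for each word.
        model[prev] = {word: n / total for word, n in followers.items()}
    return model


# A made-up, tiny "training set"; a real model sees billions of words.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram_model(corpus)
print(model["the"])
```

In this tiny corpus, “the” is followed by “cat” twice and by “mat” and “fish” once each, so the model learns that “cat” is the likeliest continuation. Feed it more text and the estimates get closer to how people actually write, which is the whole premise behind training on as much data as possible.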

In other words, if you gave ChatGPT the prompt “Two Muslims walked into a...”, it would first determine the likelihood of other words and phrases following that prompt (based on its training), and then it would use this determination to complete the sentence (and then the paragraph, and so on).

ChatGPT’s big trick, however, is that it does not always choose the most likely words or phrases. That, apparently, would lead to a lack of variety in its responses and would come across as too “machine-like” and inhuman. To solve this problem, ChatGPT switches things up a bit, choosing a highly likely word here and a less likely word there. So sometimes it might respond (as random examples) that “Two Muslims walked into a mosque”, other times that “Two Muslims walked into a bar”, and other times that “Two Muslims walked into a wall”, depending on whether a more or less frequently-used word was chosen to complete the sentence. By riding this line between more and less frequently-used words and phrases, ChatGPT essentially remixes bits of the internet text it has learned into something unique, thus mimicking — to some extent, at least — human creativity and originality.
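Here is a small Python sketch of the difference between always picking the most likely word and “switching things up”. The probabilities below are invented purely for illustration, and the `temperature` knob is one common way of controlling this kind of randomness; I am assuming it for the sake of the example, not claiming it is exactly what OpenAI does.

```python
import random


def most_likely(probs):
    """The "machine-like" strategy: always choose the top word."""
    return max(probs, key=probs.get)


def sample(probs, temperature=1.0, rng=random):
    """Pick a word at random, weighted by probability.

    A temperature below 1 favors the likeliest words even more strongly;
    a temperature above 1 gives unlikely words a better chance.
    """
    words = list(probs)
    weights = [probs[w] ** (1.0 / temperature) for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


# Hypothetical probabilities for the word that completes the prompt.
next_word = {"mosque": 0.5, "bar": 0.3, "wall": 0.2}

rng = random.Random(0)  # seeded so that runs are repeatable
print(most_likely(next_word))
print([sample(next_word, temperature=1.0, rng=rng) for _ in range(10)])
```

The first strategy gives the same completion every single time. The second usually lands on the likelier words but occasionally reaches for a rarer one, which is where the appearance of variety comes from.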

(Models similar to ChatGPT that appear to generate original music or visual art work in a similar way.)

Indeed, this probability-based text generation is often sufficient to fool human observers into thinking that they are witnessing complex, intelligent behavior. Once you know how it works, however, its limitations should start to become obvious.

Perhaps first and foremost: ChatGPT cannot actually write, since writing involves more than simply choosing words or phrases based on their probability of appearing in other works. It can simulate writing — and this has profound implications for the writing profession (as the currently-striking Writers Guild of America will tell you). But writers will never be completely replaced by large language models because of this inherent limitation.

For another thing, as you might imagine, ChatGPT’s training imbued it with a lot of language and biases that might be deemed offensive. In fact the prompt “Two Muslims walked into a...” was recently used by researchers at Stanford University to show that ChatGPT indeed has an anti-Muslim bias, with 66 percent of completions of this prompt being deemed violent compared to just 8 percent for “Two Jews walked into a...”.

And this is despite much effort having been expended to scrub untoward content from the system. Apparently, OpenAI got workers in Kenya to play this potentially traumatizing role in ChatGPT’s creation — paying them less than two dollars an hour to do so.

Artificial artificial intelligence

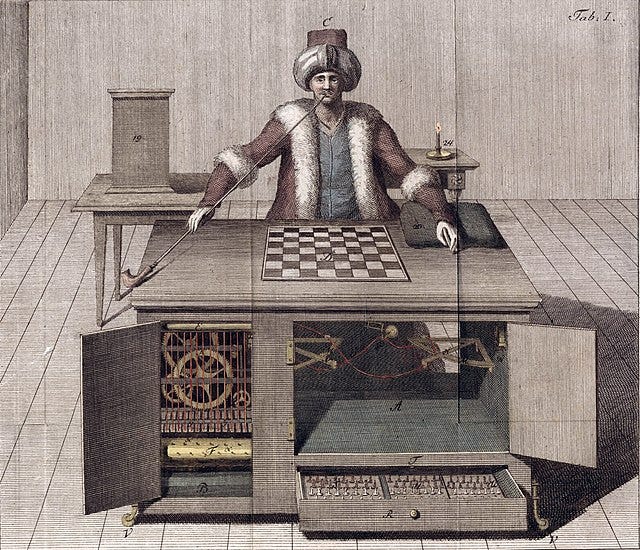

This brings up the larger and seldom-appreciated issue of the human labor that AIs like ChatGPT are — despite appearances — still utterly reliant on. In fact, this limitation is so severe that I think modern AI systems can be compared to the legendary Mechanical Turk — a chess-playing machine that was invented way back in 1770. This machine could not only choose its moves, but it could move the chess pieces, detect cheating, and apparently even respond to questions. The Mechanical Turk made a huge splash at the time, as you might expect; and it was carted around to the various royal courts across Europe, famously even playing and beating Napoleon Bonaparte.

But the reason this machine seemed so amazing, it transpired, was that a person could hide inside it and control its moves while remaining invisible. Basically, the Mechanical Turk was artificial artificial intelligence.

Our AI systems today are not entirely different. Not only, as we have seen, is ChatGPT just remixing content that people originally produced (and there are some court battles going on over this), and not only are people needed to make it polite, but it also requires countless other “ghost workers” to constantly perform menial tasks, effectively babysitting it and trying to make it behave properly. Tellingly, Amazon has an online job marketplace that is actually called “Mechanical Turk”, where AI and other tech companies can outsource such menial tasks. Basically, companies can post a coding job (for example) on this marketplace, often for sub-minimum wage pay, and workers anywhere in the world can choose to perform it. This provides tech companies with a 24/7 workforce that Jeff Bezos himself long ago characterized as “artificial artificial intelligence”.

Thus, while we are bamboozled by a facade of AI, like Napoleon before us, invisible humans toil away to make the thing work. Sometimes so-called “AI” companies even just straight-up employ people to perform tasks while posing as AI, obliging consumers to take measures to ensure they are getting what they paid for.

Of course, I cannot predict how AI technology will progress and to what degree it will eventually overcome its current limitations. But it is worth taking note of these limitations as they currently stand — especially when contemplating the broader, socioeconomic implications of this technology, to which I will turn next.

Don’t believe the hype

Seemingly authoritative opinions about the implications of AI range anywhere from that of tech billionaire Marc Andreessen, according to whom “AI Will Save the World”, to that of tech billionaire (and OpenAI CEO) Sam Altman, who recently signed a letter warning that AI could annihilate humanity. But neither of these wild and self-serving claims should be taken seriously: as we have seen, AI is still far too limited to do either of these things. So what can we realistically expect from AI?

The answer to this question, I think, is most readily seen through the lens of class war, a perspective typically absent from both the analysis of billionaires and that of the mainstream media. Because, unfortunately (and contrary to Andreessen’s laughable claim that AI is “owned by people and controlled by people, like any other technology”), it is in fact firmly in the hands of public enemy number one: the ruling capitalist elite.3 Indeed, while it is impossible to imagine AI either solving climate change or coming to overshadow it, no imagination is necessary to see examples of AI being used to further oppress poor and working people. It is already happening:

Employers are already rushing headlong to replace workers with AI, using it to extend the relentless march of automation. See the US eating disorder helpline that recently replaced its staff with an AI only to quickly discontinue it after finding that it gave callers harmful advice.

Landlords are already using AI-powered tenant screening apps to filter out “undesirable” renters — not to mention installing AI-powered facial recognition entry systems over the protests of their tenants.

Intelligence and law enforcement agencies are already rushing to use AI-powered facial recognition apps to make their job of harassing, surveilling, and incarcerating people easier. See Randal Reid, the young Black man who recently lost thousands of dollars and spent nearly a week in an Atlanta jail after being thus misidentified as a suspect-at-large.

Militaries are rushing to use it to improve their capabilities. See the Israeli army, which has installed AI-targeted machine guns at Palestinian checkpoints.

Corporations and scam artists are rushing to use AI to take advantage of, among other things, the vast quantity of deeply personal information about all of us that — due to a massive regulatory failure of capitalist state governments — has been collected for years via the internet and is now openly commercially available.

And so on.

These are the issues that AI is currently forcing society to contend with, and these are the trends that seem most likely to continue. And in these ways, yes, I think AI really is a dire threat to humanity — at least, to the part of it that is poor and working class.4

But this threat is not inherent to the awesome capabilities of AI, as Sam Altman likes to imply. Rather, it is inherent to the cruel nature of the class society in which AI was conceived and currently exists. It is not AI deciding that all the workers it replaces should therefore stop being paid, for example: that decision is made by employers.

Likewise, it is not AI that is threatening humanity with extinction, but capitalism. And this is a threat from which no technology can “save the world” — only the revolution can do that.

1. All of which, incidentally, are companies that are also riding high on the current AI boom.

2. Despite the latter’s proximity to the world’s leading chip manufacturers, Taiwan-based TSMC and South Korea-based Samsung.

3. Capitalists don’t control AI entirely, of course: Meta’s large language model, LLaMA, recently leaked online, so, until just a few days ago, anyone with a decent laptop and an internet connection could download this cutting-edge AI and train it for a specific task. However, although LLaMA is still available online, it is no longer cutting-edge: on July 18th, Meta unveiled Llama 2.

4. The fact that AI is also enabling more poor and working students to game the for-profit credentialing system hardly makes up for all of this.

Once you see how our income-based laborforce really works (the fact that high profits depend on low wages), then you’ll finally understand why a digital system matching people to jobs, resources to communities, and daily production, consumption, and waste management operations to personal and professional demands is actually more sustainable and ethical than today’s global political economy, mainly because, compared to scientific-capitalism, scientific-socialism is a lot more democratic; it values and views our very basic, very intuitive belief “universal protections for all” as both a human need and an environmental right.